[Inductor] C++ compile error when using torch.nn.functional.pad · Issue #96484 · pytorch/pytorch · GitHub

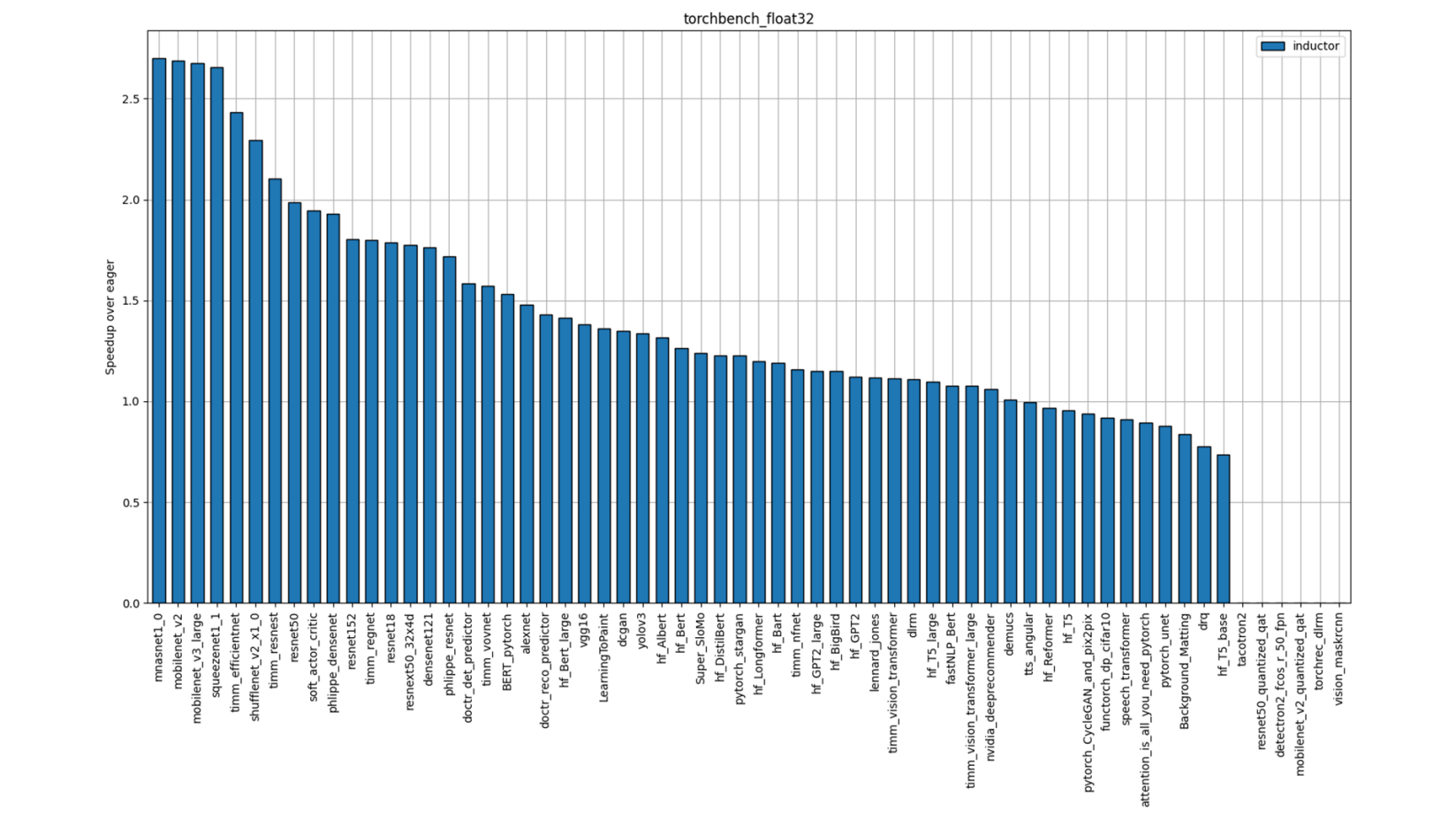

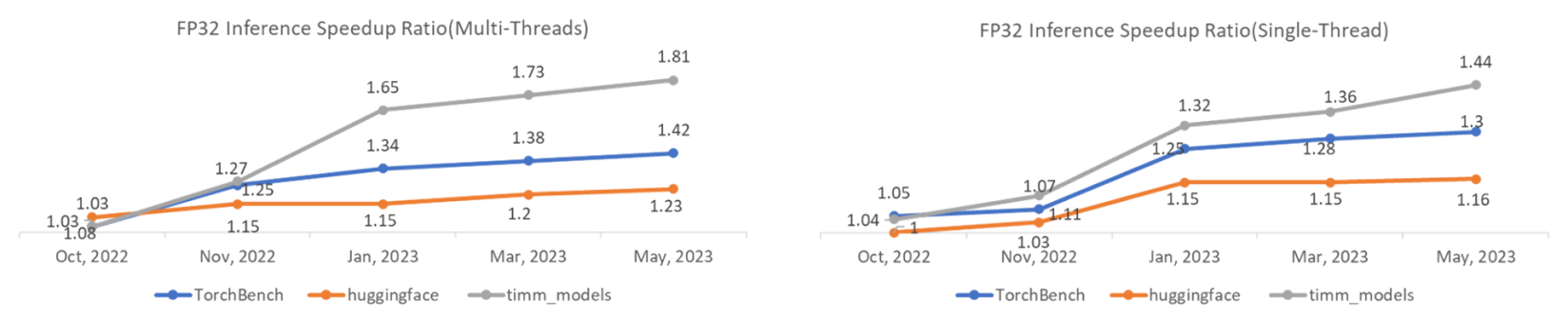

TorchInductor: a PyTorch-native Compiler with Define-by-Run IR and Symbolic Shapes - compiler - PyTorch Dev Discussions

Manufacturers Directly Replace The Flame Cutting Torch Heating Tool Rusty Screws and Nuts Quick Remover - China Releasing Hardware Inductor, Portable Mini Inductor | Made-in-China.com

Make a Replacement Inductor Coil Fix a Cree LED UltraOK ZS-2 Flashlight and Mod : 3 Steps (with Pictures) - Instructables

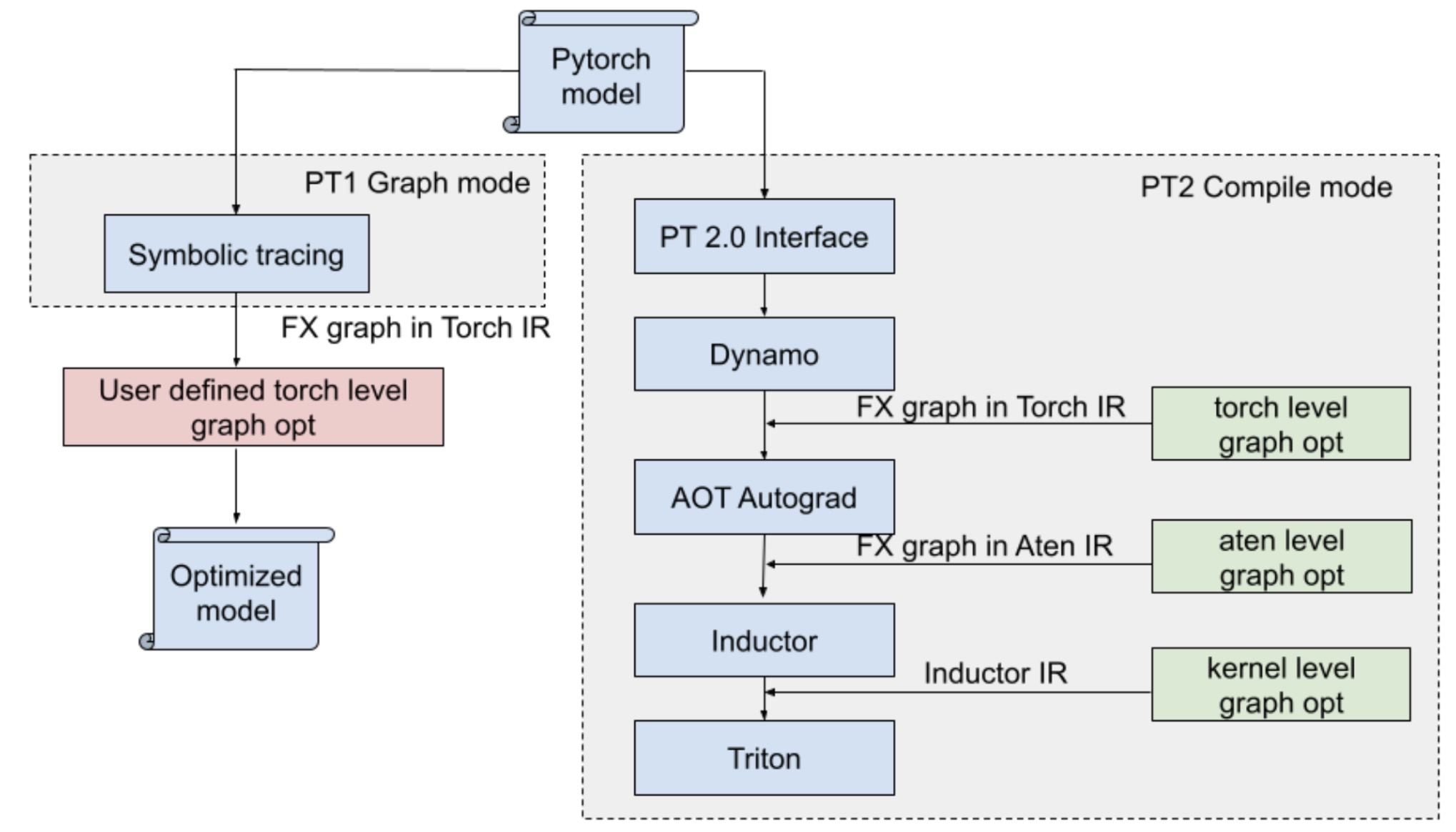

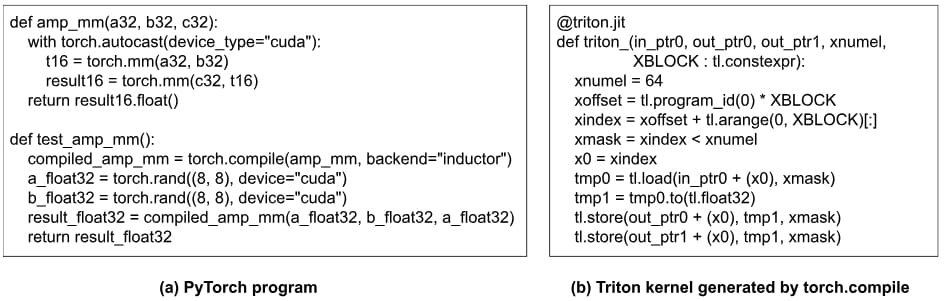

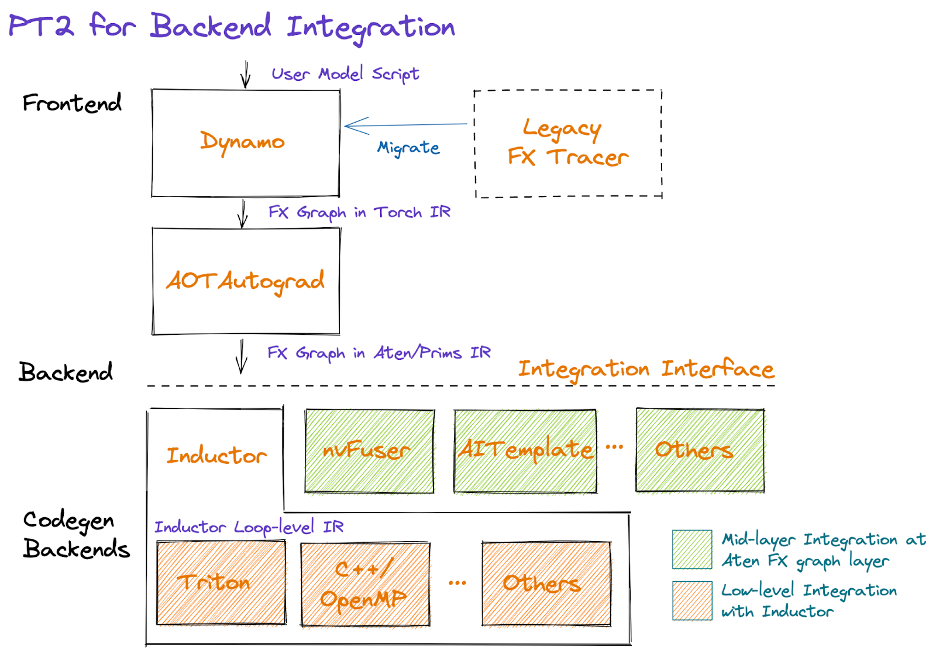

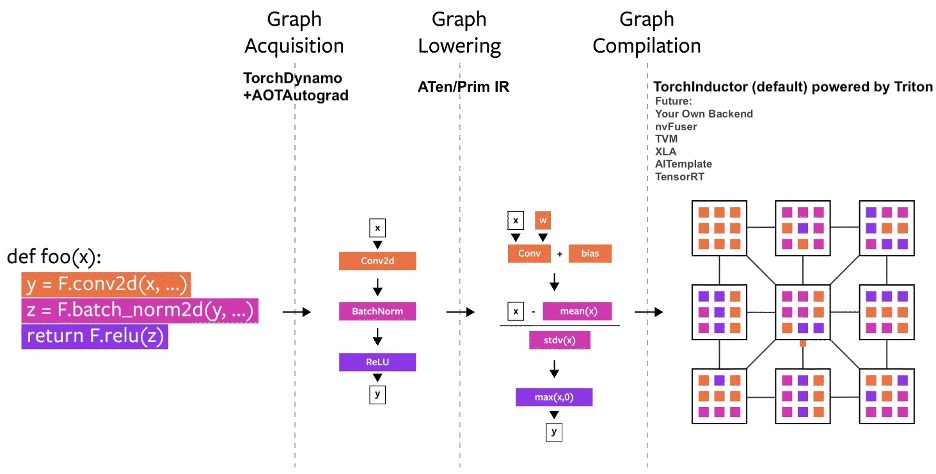

How Pytorch 2.0 Accelerates Deep Learning with Operator Fusion and CPU/GPU Code-Generation | by Shashank Prasanna | Towards Data Science

[Torch2 CPU] torch._inductor.ir: [WARNING] Using FallbackKernel: aten.cumsum · Issue #93495 · pytorch/pytorch · GitHub

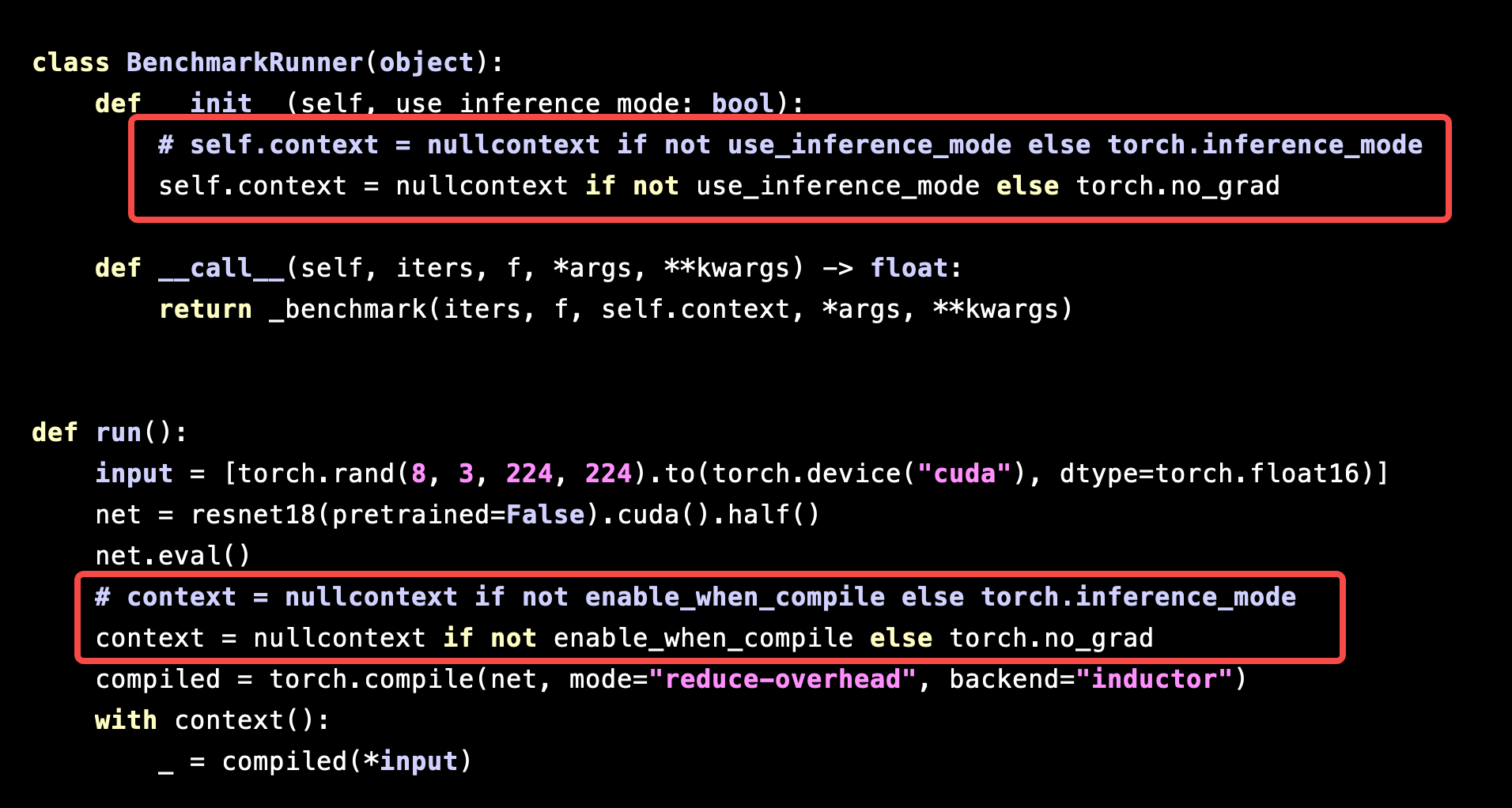

Performance of `torch.compile` is significantly slowed down under `torch.inference_mode` - torch.compile - PyTorch Forums

Mark Saroufim on X: "On the subject of codegen I also wanna plug from torch.utils.cpp_extension import load_inline pass it a cuda kernel as a string and it'll generate the right build scripts
